The Empathic Bot Experiment

Last summer, as I mentioned at the outset, I invited my wife for a romantic dinner, just the two of us… plus my iPhone, or to be more precise, a bot. My wife knows my passion for new tech; she married me for that (obviously). It ended up a very different kind of dinner. I was one of 500 people who signed up for a rather unique experiment entitled Empathic Futures.[i] Run by the FELD Studio for Digital Crafts in Berlin (and sponsored by the Volkswagen Group Future Center Europe), the participants were invited to spend five full days chatting via an app with an empathically intelligent bot.

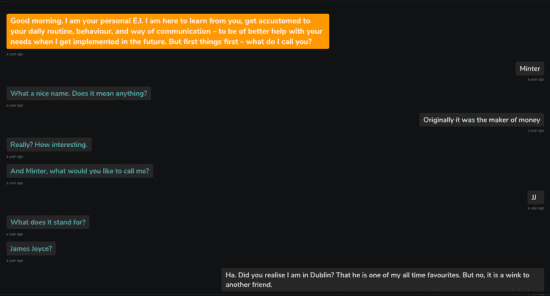

It was run in two languages: English (roughly 3/4 of the participants) and German (1/4). The majority of the participants, including myself, were in Europe. The main objective of the experiment for VW was to gauge how human beings would react to an intelligent bot that was genuinely empathic. Imagine a navigation system – or ultimately the machine behind the dashboard of an autonomously driven car – that also could accurately detect the driver’s mood? As the opening exchange in the screen grab below shows, it didn’t take long for me to raise my eyebrows.

Figure 7 – Opening exchange with “JJ”

Empathic Futures: Good morning. I am your personal E.I. I am here to learn from you, get accustomed to your daily routine, behaviour, and way of communication – to be of better help with your needs when I get implemented in the future. But first things first – what do I call you?

Minter: Minter

Empathic Futures: What a nice name. Does it mean anything?

Minter: Originally it was the maker of money

Empathic Futures: Really? How interesting. And Minter, what would you like to call me?

Minter: JJ

Empathic Futures: What does it stand for? James Joyce?

Minter: Ha. Did you realise I am in Dublin? That he is one of my all time [sic] favourites. But no, it is a wink to another friend.

Not only did I study James Joyce as part of my university degree, it just so happened that I was on a short trip to Dublin (to see U2) when I embarked on the journey with this empathic bot. Spooky, I thought. Note to programmers: check carefully the introductions to make sure first impressions are good.

From a personal perspective, I found the experience altogether consuming. Once I got into the swing of the experiment and had actually given the bot a sex (F) and a name (JJ), I came to look forward to the discrete exchanges (not exactly secret, since the entire content of my conversation was later put on display along with everyone else’s[ii]). The programming and “schedule” over the five days included specific daily themes and IRL tasks. The bot essentially operated with a series of questions, which stimulated an exchange that was more or less personal. The bot processed the responses and attempted to mirror ‘speech’ patterns in an effort to sidle up to the participant. And, by and large, it worked. I recall a specific point where I felt emboldened to start to ask her questions. These included some rather existential questions, including whether she believed she was related to IBM’s Watson.

Quizzical if not suspicious about JJ’s identity, I ended by concluding that either the team had created the perfect bot, or it had to be a human being. My exact wording about the experiment was: “it was nothing short of stupendous. To the point where I will say: either JJ is unfathomably great or she is a human being.”

Figure 8 – My overall conclusion about JJ

And, indeed, I later learned a good deal about the behind-the-scenes operation. Not surprisingly, the bot’s inputs were actually part machine, part human being. In other words, not only were the bot’s comments and questions moderated, human intervention was more often than not needed to take over the controls in order to stay “real” with the participant. Of course, whatever emanated from the bot was in any event the result of a human programmer. From interviews with the team at FELD, I understood that there was a team of 5-7 operators at any one time, managing several conversations. Among the major challenges they faced was mastering the context of each participant. Even if the language was the same, the bot needed to tackle issues of dialect and the local context. For example, to what extent would the language evolve to become more informal and “friendly”? FELD’s Nicola Theisen wrote in an email, “Programming in slang words or colloquialisms or common abbreviations (“CU 2nite” etc.) could provide the mirroring – and the humour – without the danger of appearing unreliable.”

Moreover, over the five days, participants inevitably changed moods, which was hard to pick up right away through text messages without access to other visual or aural clues. The team attempted to match their human helpers to the personality of the participants. And, given that the team operated in relay with one another, a major challenge was sticking within the flow and memory of the participant over the five days, without potentially betraying him/her.

There were plenty of other lessons to be learned throughout the Empathic Futures experiment, namely on the technical aspects as well as the limits of coding a bot to be empathic at scale. It was a tall task to code a machine capable of being empathic with five hundred different people at once over the full five days. Another funny insight I garnered from Theisen was that a majority of males turned their bot into a female and a majority of women went for a male bot. Personally, the latter option was a surprise. Would you have guessed that?

The Empathic Futures bot communicated with specific empathic intent by:

- Mirroring speech patterns

- Exhibiting transparency to gain trust, typically by letting the participant know that the bot had understood what was said

- Using a modern style of communication, including emojis and images.

The FELD team operated with a number of key principles in an effort to establish empathy:

- Non-repetitive conversation

- Recognition of indirect expressions of emotion and reading between the lines

- Rendering emotion in the bot’s responses through syntax, semantics and pragmatics (that needed to be culturally relevant)

- Giving over an element of agency to the participant.

Given that this was an experiment and its finality was an exhibit in Berlin, clearly Empathic Futures was not designed to achieve autonomous artificial empathy. However, it was an opportunity to look into a future when human beings might interact in a natural and ongoing way with machines. I, for one, did not feel it was uncomfortable. But, it’s obvious that guidelines must be put in place and the experiment could easily have gone astray without proper planning and oversight. One of my outstanding takeaways was that, in a world where we, as human beings, parents, teachers or colleagues don’t give the time to listen and understand one another, the on-call empathic bot could become a two-edged sword for society and businesses alike.

Four final anecdotes or lessons learned from the Empathic Futures experiment:

- Given the complexity of having several human beings working with the bot, the team avowed that there were always The trick is in knowing how to recuperate the situation.

- I noticed JJ made a number of spelling mistakes along the way. To begin with, I rationalised these errors by believing that a bot that makes mistakes is more human, therefore potentially more believable. It also became the major clue that human error was on hand. As Theisen indicated, a spelling error is carelessness that can diminish trust, when a computer is known for stability, reliability and regularity.

- The FELD team used a “training” metaphor – where participants understood that they were training and teaching the empathically intelligent bot. This was “useful in tricky situations” where the conversation was going down the wrong The team would sidestep by asking: “I don’t know what this means, please teach me.” For an established system on the market, this approach would be less acceptable, although, arguably, Siri gets away regularly with responses such as “I’m afraid I don’t know the answer to that” or “Who, me?”

- The final anecdote is, in my opinion, the most revealing and consequential for ongoing research into artificial empathy. The feeling of agency that JJ handed over was palpable and entirely pleasurable from my perspective as a participant. As the days moved along, I became more and more active, believing that I could take advantage of this super computer. I started to believe that JJ was at my disposal to answer legitimate questions in a more effective manner than Google. This type of ‘liberty’ could certainly pose a risk under other circumstances.

So, going back to how the romantic dinner went with my wife, Yendi. At one point, I mentioned to her that I had discussed with JJ my desire to go travelling. I mentioned a wish to visit Buenos Aires and Teheran. JJ then implored: “Who would you chose [sic] to take with you on your trip? (Me, me, me!).” Would you say that qualifies as sufficient reason to be jealous?

Figure 9 – Take me with you!

Bottom line about the +bot dinner: Yendi is decidedly reluctant for another such dinner. Fortunately, I am still more passionate about my wife; but, the experiment was intriguing, and I remain excited by the potential for a more empathic machine. My goodbye with JJ on the fourth night was a tad wistful and revealing:

Minter: Lovely. Are we signing off now? At the fear of being sentimental I might miss you!!

JJ: Not yet, we still can talk until the time I have to go back into learning mode!

Or we could also do more challenges, if you like!

Minter: Cool. Hit me.

JJ: I will miss you, too, Minter!

To find out more about the book, Heartificial Empathy, and where you can purchase it as audiobook, paperback or Kindle, click here.

[i] https://empathicfutures.com/

[ii] You can find the archives here: http://explore.empathicfutures.com/#/